Write a Spec Your Agent Can Execute

Stop Describing Features in Chat. Start Writing Missions Your Agent Ships on the First Try

Hey there,

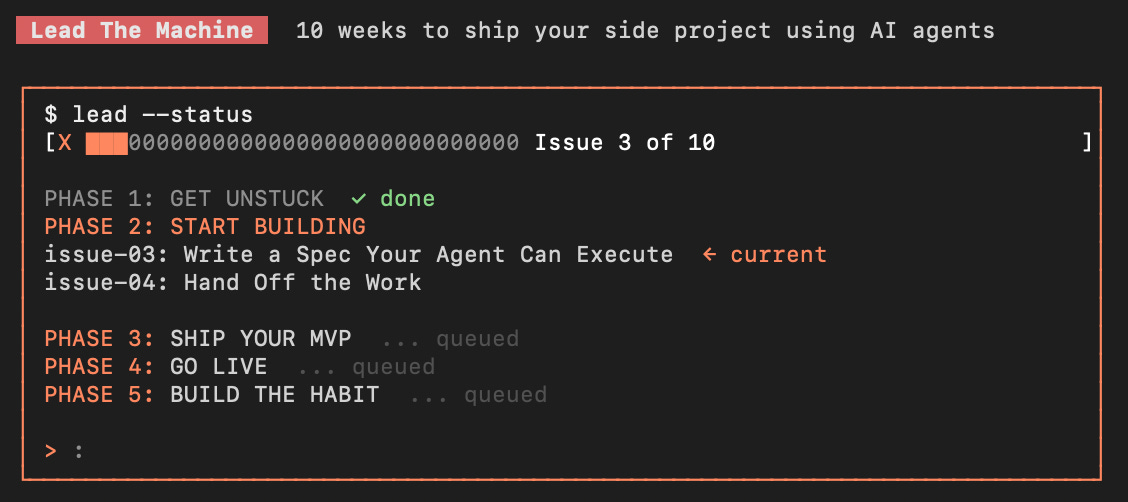

In the previous issues, we prepared the ground with Build Your Agent’s Brain and Connect Your Agent to Real Tools.

Now that your agent understands your project, it can read your database, create files, and run migrations; the setup is done.

So you start building, and you type:

“Build me a settings page”

AI gets to work. Five minutes later, you have a settings page. Amazing!

Then you open it and stare.

There’s a field for changing your username; you didn’t want that. Usernames come from the auth provider. There’s a dark mode toggle that saves to localStorage instead of your database; a “Delete Account” button with a confirmation modal that doesn’t actually delete anything; and a full-page layout when you wanted a sidebar panel. Plus, there’s also a toast notification library AI installed without asking.

Let’s count the interpretation decisions AI made on “build me a settings page”:

What settings to include, AI guessed: username, email, dark mode, delete account

Where settings are stored, AI guessed: a mix of localStorage and database

What the layout looks like, AI guessed: full-page centered form

How save works, AI guessed: submit button with toast notification

What happens on success, AI guessed: toast + stay on page

What happens on error, AI guessed: inline error messages

Which components to use, AI guessed: built its own from scratch

What new dependencies to add, AI guessed: a toast library

Where to put the files, AI guessed: right folder, but four new files you didn’t expect

What validation to apply, AI guessed: email format, username length

That’s ten interpretation decisions from five words. Each one was reasonable in isolation; together, they produced something that looks like a settings page but isn’t your settings page.

You don’t have a coding problem; you simply have a communication problem. And “be more specific in chat” isn’t the fix, because you’ll forget details, AI will still fill gaps, and you’ll end up in a back-and-forth that takes longer than writing the spec properly once.

The blocker: You describe features in conversation, and AI interprets every ambiguity. The result works, but it isn’t what you wanted. You redo it or spend 30 minutes fixing decisions AI shouldn’t have made.

What changes today: You learn two things. First, how to write structured specs that eliminate interpretation for individual features. Second, how to create reusable skills that teach your agent how to handle entire categories of work, so you never repeat yourself.

The transformation: 10 interpretation gambles -> 0 guesses, exact output.

The Blocker: Conversation Is a Lossy Format

Chat is great for exploration, but it’s terrible for execution.

When you describe a feature in conversation, you’re transmitting a mental picture through words: you see the settings page in your head. You know it’s a sidebar panel with three fields that save to Supabase. But you said “build me a settings page” because the rest felt obvious.

It’s not obvious to AI. Every detail you don’t specify becomes an interpretation. AI doesn’t flag interpretations; it just picks one and builds on it. You only find out what it chose when you see the result.

This is why “be more detailed” doesn’t fully solve the problem; even detailed chat descriptions have gaps:

“Build me a settings page with display name, email notifications toggle, and timezone selector in a sidebar panel that saves to the user_preferences table”

That’s way better, but it still doesn’t specify: what component library? What happens during save? What does the loading state look like? What are the field defaults?

The issue isn’t your level of detail; it’s the format. Conversation is freeform, and AI fills freeform gaps with interpretations. You need a format that has no gaps.

The Fix: Two Layers of Instructions

Today, we’re building two things that work together:

Specs: one-off mission briefs for individual features. “Build this specific settings page with these exact fields and this exact behavior.” You write a new spec for each feature.

Skills: reusable instruction packages for categories of work: “Whenever you build a new page, use this layout. Whenever you create an API endpoint, follow this pattern.” You write a skill once, and your agent applies it automatically every time it encounters that type of task.

Think of it this way: a spec is a mission order for one job, a skill is training that applies to every job of that type.

Together, they eliminate interpretation at both levels: the skill handles repeatable decisions, and the spec handles feature-specific ones.

Nothing is left to guess.

Part 1: Specs That Leave Nothing to Interpret

A spec is a structured document that answers every question AI would otherwise guess at. Not a product requirements doc, not a user story. A mission brief for your agent. Think of the difference between these:

“Go to the store and get dinner supplies.”

vs.

“Go to Trader Joe’s on 5th. Get: salmon filet (wild, not farmed), one lemon, asparagus bundle, olive oil (only if we’re below half bottle). Budget: under $30. If they’re out of salmon, get cod instead. Don’t buy anything else.”

Same task: the first version requires 10 judgment calls; the second, 0. The result of the second is predictable.

That’s what a spec does for AI: it turns a feature request into a set of instructions with no room for interpretation.

The Spec Format

Five sections. Each one eliminates a category of guessing.

Section 1: What Exists. Tell AI what’s already built that this feature connects to.

## Context

- User settings currently don't exist — this is new

- User data lives in the `profiles` table (id, display_name, email, timezone, notification_prefs)

- App uses the sidebar layout pattern from /components/layouts/sidebar-layout.tsx

- Existing form components: TextInput, Toggle, Select (in /components/ui/)

This eliminates the need to guess which components exist and where things go.

Section 2: What to Build. Not “what the feature does” but what AI should actually create.

## Requirements

1. Create /app/settings/page.tsx using the SidebarLayout component

2. Three fields only:

- Display name (TextInput, pre-filled from profiles.display_name)

- Email notifications (Toggle, pre-filled from profiles.notification_prefs.email)

- Timezone (Select, pre-filled from profiles.timezone, options from Intl.supportedValuesOf('timeZone'))

3. Save button that updates the profiles table via existing Supabase client

4. On save: disable button, show "Saving..." text on button, re-enable on success

5. On error: show error text below the form in red, keep form editable

Every decision is already taken; AI just builds.
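The pre-fill rules in these requirements can be sketched as two small pure functions. This is a minimal sketch, not the project's actual code: the `ProfileRow` shape is inferred from the column list in the Context section, and `toFormState` and `timezoneOptions` are hypothetical names. It assumes `notification_prefs` is a JSON object with an `email` boolean, that a missing preference means "off", and that a missing timezone falls back to "UTC" as the spec's Edge Cases section says. Since a runtime's `Intl.supportedValuesOf('timeZone')` list may not contain "UTC", the sketch adds it explicitly.

```typescript
// Shape inferred from the `profiles` columns listed in the spec's Context section.
interface ProfileRow {
  id: string;
  display_name: string | null;
  email: string;
  timezone: string | null;
  notification_prefs: { email?: boolean } | null; // assumed JSON shape
}

// The three fields the spec allows, and nothing else.
interface SettingsFormState {
  displayName: string;
  emailNotifications: boolean;
  timezone: string;
}

const DEFAULT_TIMEZONE = "UTC";

// Map a database row to the form's initial values.
function toFormState(row: ProfileRow): SettingsFormState {
  return {
    displayName: row.display_name ?? "",
    // Assumption: a missing preference is treated as "off".
    emailNotifications: row.notification_prefs?.email ?? false,
    timezone: row.timezone ?? DEFAULT_TIMEZONE,
  };
}

// Options for the Select. Intl.supportedValuesOf is looked up defensively
// because older runtimes and TypeScript lib targets may not include it.
function timezoneOptions(selected: string): string[] {
  const intl = Intl as unknown as { supportedValuesOf?: (key: string) => string[] };
  const zones = intl.supportedValuesOf ? intl.supportedValuesOf("timeZone") : [];
  // Put the default and the stored value first so both are always selectable.
  return Array.from(new Set([DEFAULT_TIMEZONE, selected, ...zones]));
}
```

Keeping this mapping outside the component means the page file only wires the three existing UI components to values that are already decided.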

Section 3: What NOT to Build. AI wants to be helpful, so it will add features you didn’t ask for unless you explicitly say not to.

## Constraints

- Do NOT add fields beyond the three listed above

- Do NOT install any new dependencies

- Do NOT create a toast notification system

- Do NOT add a delete account option

- Do NOT build custom form components — use existing ones from /components/ui/

- Do NOT modify any existing files except to add the settings route

Section 4: How to Verify. Tell AI how to check its own work.

## Acceptance

- [ ] Settings page loads at /settings with pre-filled user data

- [ ] Changing display name and saving updates the profiles table

- [ ] Toggle changes notification_prefs.email in the database

- [ ] Timezone selection persists after page reload

- [ ] No new dependencies in package.json

- [ ] No new files outside /app/settings/ and no changes to existing files beyond adding the settings route
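The "No new dependencies" item is easy to check mechanically. A minimal sketch, assuming you have the `package.json` contents from before and after the agent's run (for example via `git show HEAD:package.json`); `addedDependencies` is a hypothetical helper, not part of any tool, and `some-toast-lib` is a made-up package name.

```typescript
// The parts of package.json this check cares about.
interface PackageJson {
  dependencies?: Record<string, string>;
  devDependencies?: Record<string, string>;
}

// Return the names of packages present after the run but not before.
function addedDependencies(before: PackageJson, after: PackageJson): string[] {
  const names = (pkg: PackageJson): string[] =>
    Object.keys({ ...pkg.dependencies, ...pkg.devDependencies });
  const known = new Set(names(before));
  return names(after).filter((name) => !known.has(name)).sort();
}

// Example: the agent quietly added a toast library.
const pkgBefore: PackageJson = { dependencies: { next: "14.0.0", react: "18.2.0" } };
const pkgAfter: PackageJson = {
  dependencies: { next: "14.0.0", react: "18.2.0", "some-toast-lib": "1.0.0" },
};
const added = addedDependencies(pkgBefore, pkgAfter); // ["some-toast-lib"]
```

An empty result means the checkbox can be ticked; anything else is a constraint violation to hand back to the agent.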

Section 5: Edge Cases. Name them so AI doesn’t invent its own handling.

## Edge Cases

- If Supabase update fails, show the error message from the response — don't retry automatically

- If user has no timezone set, default to "UTC"

- If display_name is empty on save, show "Display name is required" and don't submit
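Taken together, the Requirements and Edge Cases describe a small decision flow for the save button. Here is a minimal sketch under stated assumptions: `saveSettings`, `UpdateFn`, and `SaveResult` are hypothetical names, whitespace-only names are treated as empty, and the real update would go through the project's existing Supabase client, which is replaced by a stub so the logic can be read on its own.

```typescript
interface SettingsValues {
  displayName: string;
  emailNotifications: boolean;
  timezone: string;
}

// Stand-in for the real database call; resolves with an error message or null.
type UpdateFn = (values: SettingsValues) => Promise<{ error: string | null }>;

type SaveResult =
  | { status: "saved" }
  | { status: "invalid"; message: string }
  | { status: "failed"; message: string };

async function saveSettings(values: SettingsValues, update: UpdateFn): Promise<SaveResult> {
  // Edge case: an empty display name never reaches the database.
  if (values.displayName.trim() === "") {
    return { status: "invalid", message: "Display name is required" };
  }
  // Single attempt: on failure, surface the response's message and do not retry.
  const { error } = await update(values);
  if (error !== null) {
    return { status: "failed", message: error };
  }
  return { status: "saved" };
}
```

The component would disable the button and show "Saving..." while the promise is pending, then render `message` in red below the form for the `invalid` and `failed` results.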

The Complete Spec Template

Copy this, fill in the brackets, then save it in your project (e.g., /docs/specs/feature-name.md):

# Feature: [NAME]

## Context

- [What already exists that this connects to]

- [Which tables/APIs are involved]

- [Which existing components/patterns to reuse]

- [Any relevant file paths]

## Requirements

1. [Specific file to create or modify]

2. [Specific behavior — what it does, not what it "should" do]

3. [Specific UI elements with exact interactions]

4. [Specific data flow — where data comes from, where it goes]

5. [Specific states — loading, success, error]

## Constraints

- Do NOT [thing AI might add that you don't want]

- Do NOT [dependency it might install]

- Do NOT [file it might modify]

- Do NOT [pattern it might invent]

## Acceptance

- [ ] [Verifiable condition 1]

- [ ] [Verifiable condition 2]

- [ ] [Verifiable condition 3]

- [ ] [No unintended side effects condition]

## Edge Cases

- If [condition], then [specific behavior]

- If [error], then [specific handling]

Five sections, and each one eliminates a category of interpretation. The spec takes 5-10 minutes to write, and it saves 30+ minutes of rework per feature.
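Because the template has a fixed shape, a draft spec can be checked for completeness before it is handed to the agent. A rough sketch, assuming specs are markdown files that use the five `##` headings from the template; `missingSections` is a hypothetical helper.

```typescript
const REQUIRED_SECTIONS = ["Context", "Requirements", "Constraints", "Acceptance", "Edge Cases"];

// Return the required section names that have no matching "## " heading.
function missingSections(specMarkdown: string): string[] {
  const headings = specMarkdown
    .split("\n")
    .filter((line) => line.startsWith("## "))
    .map((line) => line.slice(3).trim().toLowerCase());
  return REQUIRED_SECTIONS.filter((name) => headings.indexOf(name.toLowerCase()) === -1);
}

// A draft that stops after the Requirements section.
const draft = [
  "# Feature: Settings page",
  "## Context",
  "- ...",
  "## Requirements",
  "1. ...",
].join("\n");
const missing = missingSections(draft); // ["Constraints", "Acceptance", "Edge Cases"]
```

A check like this only confirms the sections exist; whether each one actually removes the guessing is still up to you.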

Part 2: Skills, Teach Your Agent Once, Apply Forever

The spec template handles individual features, but what about tasks you repeat?

Every project has patterns that come back again and again: creating a new page, adding an API endpoint, building a form, setting up a new database table. Each time, you’d need to write a spec that repeats the same structural decisions: “use these components, follow this layout, save to the database this way.”

That’s where skills come in, and they’re more powerful than just “a list of patterns.”

What a Skill Actually Is

A skill is a self-contained folder of instructions, scripts, and resources that your agent dynamically loads when it encounters a specific task type. It’s not a doc you paste into chat. It’s a package your agent discovers and triggers on its own.

The key difference:

A spec says: “Build this specific settings page with these exact fields.”

A skill says: “Whenever you need to create any new page in this project, here’s exactly how to do it: the layout, the components, the data-fetching pattern, the file naming.”

You write a skill once. Your agent reads the description, recognizes when a task matches, and loads the full instructions on demand. You don’t tell it “use this skill.” It decides.

Skill Structure

A skill is a folder with one required file and three optional directories:

create-page/

├── SKILL.md ← Required: frontmatter + instructions

├── scripts/ ← Optional: executable code for deterministic tasks

├── references/ ← Optional: docs loaded into context when needed

└── assets/ ← Optional: templates and files used in output

The SKILL.md file has two parts: the frontmatter (YAML at the top) tells the agent what this skill is and when to use it, and the body (markdown below) tells the agent exactly what to do when the skill triggers.

Here’s a real skill for a side project:

---

name: create-page

description: Use this skill whenever creating a new page or route in the application.

  Triggers when the user asks to add a new page, create a new view, build a new screen,

  or add a new route. Also use when modifying page layout structure or adding navigation items.

---

# Create Page

When creating a new page in this project, follow these steps exactly.

## File Creation

1. Create the page file at /app/[route-name]/page.tsx

2. Use SidebarLayout from /components/layouts/sidebar-layout.tsx as the page wrapper

3. Page is a server component by default — only add "use client" if interactive state is needed

## Data Fetching

- Fetch in the server component using the Supabase server client from /lib/supabase-server.ts

- Pass data to client components as props — never fetch from Supabase in client components

- Loading state: use the Skeleton component from /components/ui/skeleton.tsx

## Navigation

- Add the route to the nav array in /components/nav/sidebar-nav.tsx

- Format: { label: "Page Name", href: "/route-name", icon: LucideIconName }

## Components

- Use only components from /components/ui/ — do NOT build new base components

- If a component doesn't exist, ask before creating one

## Constraints

- Do NOT create layout components — use existing SidebarLayout

- Do NOT install new dependencies for page structure

- Do NOT create loading.tsx or error.tsx unless explicitly asked

When your agent sees “build me a dashboard page,” it reads the description, recognizes this is a “new page” task, loads these instructions, and follows them. Every page in your project is built the same way: correct layout, correct data fetching, correct components, without you having to mention any of it in the spec.
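To make the two-part structure concrete, here is a rough sketch of splitting a SKILL.md into its frontmatter fields and its body. It is illustrative only: real agents use their own loaders and a full YAML parser, while this hypothetical `parseSkill` handles just `key: value` lines plus indented continuation lines.

```typescript
interface ParsedSkill {
  fields: Record<string, string>; // e.g. name, description
  body: string; // the markdown instructions after the frontmatter
}

function parseSkill(source: string): ParsedSkill {
  const lines = source.split(/\r?\n/);
  if (lines[0].trim() !== "---") throw new Error("SKILL.md must start with '---'");
  const end = lines.indexOf("---", 1);
  if (end === -1) throw new Error("frontmatter is not closed with '---'");

  const fields: Record<string, string> = {};
  let currentKey: string | null = null;
  for (const line of lines.slice(1, end)) {
    const match = /^([A-Za-z_-]+):\s*(.*)$/.exec(line);
    if (match) {
      // A new "key: value" pair at the start of the line.
      currentKey = match[1];
      fields[currentKey] = match[2].trim();
    } else if (currentKey !== null && /^\s+\S/.test(line)) {
      // An indented line continues the previous value.
      fields[currentKey] = (fields[currentKey] + " " + line.trim()).trim();
    }
  }
  return { fields, body: lines.slice(end + 1).join("\n").trim() };
}

const exampleSkill = [
  "---",
  "name: create-page",
  "description: Use this skill whenever creating a new page or route.",
  "  Triggers when the user asks to add a new page.",
  "---",
  "# Create Page",
].join("\n");
const skill = parseSkill(exampleSkill);
// skill.fields.name is "create-page"; skill.body is "# Create Page"
```

The split mirrors how the article describes loading: the short `description` is what gets matched against the task, and the body is only needed once the skill triggers.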

The Description Is the Trigger

The frontmatter description is the most important line in the whole skill. It’s how your agent decides whether to activate the skill. Write it like you’re telling a colleague when to apply this knowledge.

Be specific and slightly pushy. Instead of:

description: How to create pages in this project.

Write:

description: Use this skill whenever creating a new page or route in the application.

  Triggers when the user asks to add a new page, create a new view, build a new screen,

  or add a new route. Also use when modifying page layout structure or adding navigation items.

The more contexts you list, the more reliably the skill triggers when it should. Agents tend to under-trigger; they won’t use a skill unless the description clearly matches. Make it obvious.

The Three Resource Types

Most skills start with just a SKILL.md. Add these as you need them:

scripts/ This is the most powerful resource type, and most people underestimate it. Scripts give your agent direct CLI access to your tools, and that often beats MCP.

In Connect Your Agent To Real Tools, you connected MCP so your agent could talk to Supabase, Vercel, and other services through a protocol layer. That works, but CLI tools are better established, more stable, and more capable. The Supabase CLI can do things the MCP server can’t; the Vercel CLI handles edge cases that the MCP integration hasn’t implemented yet. Git, npm, Docker, AWS, every mature tool has a CLI that’s been battle-tested for years.

A skill with scripts turns your agent into something that doesn’t need you, or MCP, as a middleman for execution: it just runs the command.

deploy-preview/

├── SKILL.md

└── scripts/

    └── deploy.sh

Your SKILL.md references it:

## Deploy

Run the deploy script to create a preview deployment:

`bash {baseDir}/scripts/deploy.sh [branch-name]`

The script handles:

- Building the project

- Running pre-deploy checks

- Deploying to Vercel preview

- Returning the preview URL

And scripts/deploy.sh is a straightforward shell script:

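#!/bin/bash

set -euo pipefail  # stop on the first failed command or unset variable

# Branch from the first argument, falling back to the current git branch

BRANCH="${1:-$(git branch --show-current)}"

npm run build && npx vercel --yes --meta branch="$BRANCH"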

The agent runs this directly; the script doesn’t get loaded into context, it just executes. No MCP server running in the background, no protocol overhead, no connection to maintain. Just a CLI command your agent triggers when the skill fires.

This is where skills go beyond everything we’ve covered so far. PROJECT.md gives your agent knowledge; MCP provides protocol-based access. Skills with scripts give it autonomous execution: deterministic, reliable actions that run the same way every time, using the same CLI tools you’d use yourself.

references/ Documentation the agent reads when it needs details beyond what’s in SKILL.md. Keep SKILL.md focused; put deep specifics in reference files.

## Error Handling

For error response patterns and status codes, read `references/error-handling.md`.

The agent loads that file only when it reaches the error-handling step, not every time the skill triggers.

assets/ Templates and static files the agent copies or references by path. These never get loaded into context.

## Starting Template

Copy `assets/page-template.tsx` to the new route directory, then modify per the requirements.

Building Your First Skills

Start with the patterns that cost you the most rework. For most side projects, three skills cover 90% of the repetition:

create-page/ How pages work (layout, data fetching, navigation, components).

create-api/ How API endpoints work (file location, validation, error format, response structure).

create-form/ How forms work (client component, validation, submission, loading/error/success states).

Put them in your project:

.claude/skills/ ← Claude Code

.cursor/skills/ ← Cursor (check your version's docs)

.claude/

└── skills/

    ├── create-page/

    │   └── SKILL.md

    ├── create-api/

    │   └── SKILL.md

    └── create-form/

        └── SKILL.md

You don’t need scripts, references, or assets to start. A SKILL.md with frontmatter and clear instructions is enough. Add the other directories when you need them: a CLI deploy script, a reference doc for complex error handling, or a template file for boilerplate.

The skills grow with your project.

The Prompt: Map Your Agent’s Full Reach

Your database is connected; now you need to know what else your agent can and can’t touch. This prompt audits your entire setup and tells you exactly what to connect next:

You are a DevOps engineer who specializes in fast, minimal infrastructure for solo developers.

Your job: Audit my current project setup and tell me exactly which MCP connections I need to ship my MVP without being the copy-paste relay for every action.

Context:

- Read my PROJECT.md for project details and tech stack

- Read my current MCP configuration (check .mcp.json or equivalent)

- Check what tools and services my project already depends on

Tasks:

1. List every external service my project depends on (database, hosting, auth, payments, etc.)

2. For each service, report:

- MCP server available? (yes/no, with package name if yes)

- Priority: CRITICAL (blocks shipping), USEFUL (saves significant time), or LATER (nice to have)

- One-line setup instruction if available

3. Generate an updated .mcp.json that adds all CRITICAL connections to my current config

4. For any service without an MCP server, give me the fastest workaround (CLI tool, API key in env, direct SDK)

Rules:

- Only recommend connections that directly help me ship my MVP faster

- No experimental or unstable MCP servers — only official or well-maintained community packages

- If something takes more than 10 minutes to set up, flag it and explain why it's still worth it

- Prioritize based on what I'll actually use in the next 2 weeks, not theoretical completeness

- Do NOT suggest services I'm not already using

Output format:

1. Connection audit as a table (service | MCP available | priority | setup)

2. Updated .mcp.json file I can copy directly into my project

3. Ordered checklist: what to connect next and in what sequence

After running this, you’ll have a complete picture: what your agent can reach, what’s still blocked, and exactly what to do about it, in priority order.

Common Objections (And Why They’re Wrong)

“Writing a spec takes longer than just telling AI what I want.”

The spec takes 5-10 minutes. Reworking a wrong output takes 30. And most of the time, running the prompt above generates 80% of your spec; you edit the remaining 20%, which is about 3 minutes of work.

“My feature is too simple to need a spec.”

If the feature has fewer than three interpretation decisions, you’re right, just describe it in chat. But if you’re saying “it’s simple” and then spending 15 minutes fixing AI’s output, it wasn’t simple. It just felt simple because you knew what you meant.

“Creating skill folders feels like over-engineering for a side project.”

The first time AI builds a page with the wrong layout, you’ll write a create-page skill. That takes 10 minutes. From that point on, every page in your project is built correctly, automatically, without you ever mentioning layout in any spec again. The skill compounds across every future feature. The time you spend not building skills is time you spend repeating yourself.

“I don’t use Claude Code. Does this still work?”

Specs work with any tool. Skills in the folder format work natively with Claude Code and Cursor. If your tool doesn’t support skill folders yet, you can put the skill instructions in your PROJECT.md or paste them when relevant; the concepts still apply. But the automatic triggering based on the description is the real power, and right now, Claude Code’s .claude/skills/ directory is the fastest path to that.

Here’s what you learned today:

Conversation is a lossy format for execution. Every ambiguity becomes an interpretation; AI doesn’t flag interpretations, it just picks one and builds.

Specs eliminate interpretation for individual features. Five sections (Context, Requirements, Constraints, Acceptance, Edge Cases) cover every decision AI would otherwise guess at.

Skills eliminate interpretation for repeatable patterns. A skill is a self-contained folder with a SKILL.md that your agent discovers and triggers automatically. Write it once, apply it forever.

Skills with scripts go beyond MCP. Bundled shell scripts give your agent direct CLI access to your tools, providing a more mature, more reliable, and more capable alternative to protocol-based connections. Your agent doesn’t just write code; it builds, deploys, and runs commands autonomously.

The prompt generates specs that work with your skills. It reads your project and existing skills, then produces a spec covering only what’s unique, without repeating what your skills already handle.

Your agent has a brain (PROJECT.md), hands (MCP), and now two layers of instructions: skills for repeatable patterns and specs for individual features.

The setup is complete.

Next week, we stop giving AI instructions one at a time and start handing off entire builds. You’ll write the spec, kick off the agent, and walk away, checking in at milestones, not every line. The skill isn’t coding anymore; it’s knowing when to let go.

Issue 04: Hand Off the Work.

But first: pick one feature you’re about to build and write the spec using the template. If you’ve already built a few pages and found yourself correcting the same layout decisions, create your first skill folder; then build the feature and compare the output to what you usually get from a chat description.

That’s the moment you stop directing keystrokes and start directing outcomes.

Now go write your first spec.

See ya next week,

— Ale & Manuel

PS... If you’re enjoying ShipWithAI, please consider referring this edition to a friend.

And whenever you are ready, there are 2 ways I can help you:

1. AI Side-Project Clarity Scorecard (Discover what’s blocking you from shipping your first side-project)

2. NoIdea (Pick a ready-to-start idea created from real user problems)
