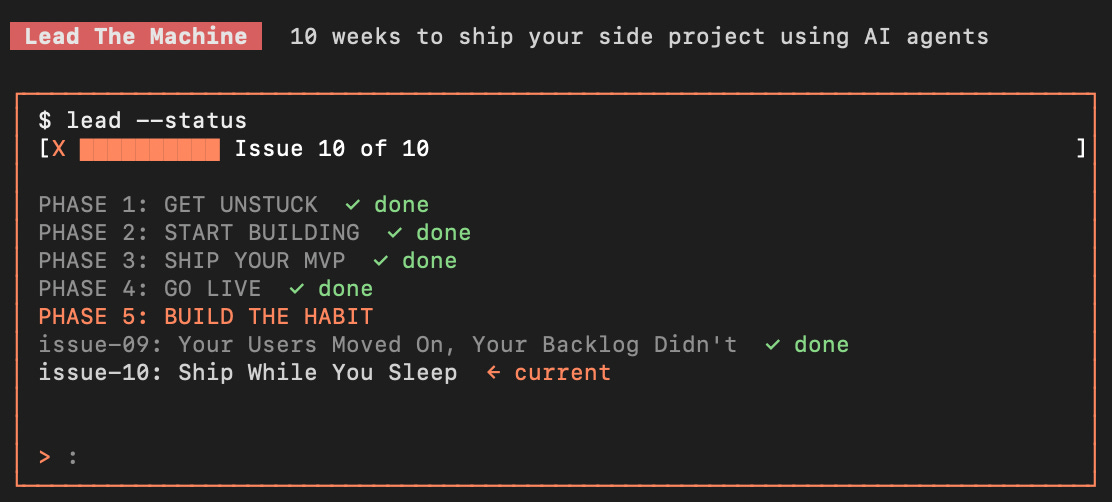

Ship While You Sleep

Your Agent Doesn't Need Breaks. You Only Build When You're Awake. Fix That.

Hey there,

Let’s count the hours.

On a normal weekday, you get maybe 2 hours of focused build time for your side project. Morning commute, lunch break, a bit after dinner if you’re not too tired. Add weekends, and you’re at ~15 hours per week of actual coding.

Your agent? It can work 168 hours per week. It doesn’t need breaks, and it doesn’t lose focus. It doesn’t switch contexts between work calls and family time. Every hour your agent isn’t building is an hour you’re not shipping.

The sleeping hours alone — 8 hours × 7 nights = 56 hours per week of wasted capacity. That’s 3.7x your current focused build time: gone, every week.

Now here’s the uncomfortable part: you already have everything you need to capture those hours:

A spec format that the agent can execute without you (Issue 03)

Skills with deterministic scripts that survive context resets (Issues 05-09)

Parallel build expertise (Issue 09)

A reference to Ralph, Claude Code’s native loop, and other autonomous runners (Issue 09’s parallel-tools.md)

The missing piece isn’t technology, it’s trust. You don’t trust the agent alone overnight because you’ve never built that trust, and the way to build trust is to try, fail small, learn, and try again with tighter guardrails.

The blocker: You only ship when you’re awake and the agent is idle for 56 hours a week. You’ve never run an autonomous overnight loop because the failure mode, waking up to 8 hours of corrupted code, feels catastrophic.

What changes today: You install a skill that handles the pre-sleep ritual (guardrails, kill conditions, pre-flight checks) and the morning ritual (structured review, outcome categorization, cleanup). The actual overnight loop is handled by Ralph. Your first run is a 1-hour “nap run” with 1 spec; you build trust incrementally.

The transformation: Your agent works while you sleep. You wake up to a structured report, not a mystery. Some nights ship features, others surface issues. Over time, your overnight success rate climbs from 30% to 70%+ as your guardrails tighten to your specific codebase.


Being Brutally Honest: This Is the Hardest Issue

I need to say this clearly before going further: autonomous overnight runs fail more often than any other technique in this series.

Parallel builds (Issue 09) succeed maybe 80% of the time. Deploy sessions (Issue 07) succeed 90%+. Launch days (Issue 08) always produce something. Overnight runs on your first few attempts? Expect a 30-40% success rate.

The failure modes are specific:

The agent gets stuck in a loop. Tests fail, the agent tries a fix, and the same error comes back. It tries again and hits the same error. Three hours later there is no progress, but the branch has 15 junk commits.

The agent drifts out of scope. A “simple CSS fix” turns into modifying the auth middleware because the agent followed a tangential lead. You wake up to changes in files you never authorized.

The agent makes subtle correctness errors. Code compiles, tests pass, but the logic is wrong. The spec was ambiguous, and the agent picked the wrong interpretation, which you find out days later when a user reports a bug.

Nothing happens. Pre-flight check failed silently, the loop never started, and you woke up to an unchanged repo.

These aren’t edge cases, they’re the default experience on runs 1-3. The goal of your first overnight runs isn’t to ship code, it’s to discover what your specific project needs in its guardrails. Treat the first 3-5 runs as calibration, expect them to fail, then learn from each one.

After calibration (usually 5-10 runs in), the success rate climbs sharply. Developers who stick with this report 60-80% success rates on overnight runs for well-defined bug fixes and small features, but never 100%. Never for ambiguous specs, never for novel integrations.

Set expectations accordingly. This is a multi-week skill to develop, not a button to press tonight.

The Skill: night-shift

This skill handles the two human rituals around autonomous runs: preparation before bed and review in the morning. The actual overnight loop is Ralph’s job (from Issue 09’s parallel-tools.md). Our skill wraps Ralph with safety checks and review structure.

night-shift/

├── SKILL.md ← PREPARE and REVIEW modes

├── scripts/

│ ├── check_sleep_ready.py ← Pre-flight: git clean, tests pass, specs valid

│ └── morning_review.py ← Analyzes commits, detects stuck patterns, categorizes outcome

├── references/

│ ├── guardrails.md ← Scope, kill conditions, budget patterns

│ └── ralph-integration.md ← Ralph setup, spec → prd.json conversion

└── assets/

└── night-shift-checklist.md ← Pre-sleep + morning review template

Install it:

git clone https://github.com/shipwithai/skills.git /tmp/shipwithai-skills

cp -r /tmp/shipwithai-skills/night-shift .claude/skills/night-shift

rm -rf /tmp/shipwithai-skills

# Verify:

ls .claude/skills/night-shift/

# SKILL.md scripts/ references/ assets/

You also need Ralph installed. Follow the setup in references/ralph-integration.md. The short version is /plugin marketplace add snarktank/ralph in Claude Code.

When and How the Skill Triggers

PREPARE mode triggers when you say things like:

“I want to set up an overnight build”

“Prepare for a night run”

“Run this overnight”

“Let the agent work on this while I sleep”

REVIEW mode triggers when you say things like:

“Review last night’s work”

“What happened overnight?”

“Did the overnight build succeed?”

“Check the night-shift run”

The Pre-Sleep Ritual (PREPARE mode)

Tonight is your first attempt. You have one spec ready: a UX fix that rewords a confusing button label across 3 files. Small, well-scoped, low-risk. A perfect calibration run.

Open Claude Code and type:

I want to try my first overnight run. Use the fix-rename-button spec.

Make it a nap run, 1 hour max.

Step 1: Reality Check (30 seconds)

The skill asks:

First overnight run? [yes]

How many specs? [1]

How long is the nap run? [1 hour]

Is git committed? [yes / no]

You confirm yes, 1, 1 hour, yes. If you’d said 5 specs or 8 hours, the skill would push back hard: “First overnight runs are MAX 1 spec and 1-2 hours. Start smaller, build trust incrementally.”
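The pushback rule is simple enough to picture as a small validation function. This is a minimal sketch, not the skill's actual code: the names `RunRequest` and `reality_check` are hypothetical, and the limits (1 spec, 2 hours) are taken from the pushback message quoted above.

```python
from dataclasses import dataclass


@dataclass
class RunRequest:
    first_run: bool
    spec_count: int
    hours: float
    git_committed: bool


def reality_check(req: RunRequest) -> list[str]:
    """Return a list of objections; an empty list means the request is acceptable."""
    objections = []
    if not req.git_committed:
        objections.append("Commit your work before starting a run.")
    # Assumed first-run limits, copied from the pushback message in the text.
    if req.first_run and (req.spec_count > 1 or req.hours > 2):
        objections.append(
            "First overnight runs are MAX 1 spec and 1-2 hours. "
            "Start smaller, build trust incrementally."
        )
    return objections
```

With the answers from this walkthrough (first run, 1 spec, 1 hour, git committed) the list comes back empty. Asking for 5 specs or 8 hours produces the pushback.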

Step 2: Pre-Flight Check (1 minute)

The skill runs scripts/check_sleep_ready.py --specs /specs/fix-rename-button.md --first-run:

{

"checks": {

"git_clean": { "pass": true, "reason": "Clean working tree" },

"branch": {

"pass": true,

"reason": "On safe branch: night-shift/20260407"

},

"tests": { "pass": true, "reason": "Tests pass (npm test)" },

"typecheck": { "pass": true, "reason": "Typecheck passes" },

"specs": [

{

"pass": true,

"spec": "/specs/fix-rename-button.md",

"critical_areas": []

}

]

},

"blockers": [],

"warnings": [],

"ready": true

}

If anything fails (dirty git, failing tests, spec missing a section, file referenced in blocked paths), the skill stops and tells you what to fix. No negotiation.
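If you want a feel for what a pre-flight check like this does, here is a rough sketch. It is not the contents of check_sleep_ready.py. The function names are made up for illustration, and the report shape simply mirrors the JSON above. The one real dependency is `git status --porcelain`, which prints nothing when the working tree is clean.

```python
import subprocess


def git_is_clean(porcelain_output: str) -> dict:
    """Interpret the output of `git status --porcelain` (empty means clean)."""
    dirty = [line for line in porcelain_output.splitlines() if line.strip()]
    if dirty:
        return {"pass": False, "reason": f"{len(dirty)} uncommitted change(s)"}
    return {"pass": True, "reason": "Clean working tree"}


def command_passes(cmd: list[str], label: str) -> dict:
    """Run a command such as ["npm", "test"]; exit code 0 counts as a pass."""
    try:
        result = subprocess.run(cmd, capture_output=True, text=True)
    except FileNotFoundError:
        # Fail loudly instead of silently skipping a missing tool.
        return {"pass": False, "reason": f"{label}: command not found ({cmd[0]})"}
    ok = result.returncode == 0
    return {"pass": ok, "reason": f"{label} {'pass' if ok else 'failed'}"}


def build_report(checks: dict) -> dict:
    """Any failed check becomes a blocker; ready only when there are none."""
    blockers = [name for name, check in checks.items() if not check["pass"]]
    return {
        "checks": checks,
        "blockers": blockers,
        "warnings": [],
        "ready": not blockers,
    }
```

The design choice worth copying is that a missing tool counts as a failure, not a skip. That is what keeps the "nothing happened" failure mode from hiding behind a green report.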

Step 3: Guardrail Generation (2 minutes)

The skill reads references/guardrails.md and generates guardrails for this specific run:

GUARDRAILS FOR THIS RUN:

Allowed scope:

/components/ui/primary-button.tsx

/components/ui/primary-button.test.tsx

/app/forms/[id]/page.tsx

Blocked (hard refuse):

/app/api/auth/**

/prisma/migrations/**

.env*

package.json (dependencies section)

middleware.ts

Kill conditions:

• Tests fail and can't be fixed in 2 iterations → abort

• Same error message × 3 → stuck loop, abort

• Files modified outside allowed scope → drift, abort immediately

• Any new dependency added → scope creep, abort

• More than 5 files modified → scope creep (strict for first run)

• Commit count > 9 → too many retries, abort

Budget:

Max iterations: 3 (first-run strict)

Wall-clock: 1 hour

File limit: 5

You review: the guardrails look tight, maybe too tight, but for a first run, tighter is better. A false abort is much cheaper than drift.
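To make the scope and kill-condition rules concrete, here is a minimal sketch of how they could be evaluated against a list of modified files. It is an illustration under assumptions, not the skill's implementation: `is_blocked` and `kill_reason` are hypothetical names, the lists are copied from the guardrails above, and the dependency rule for package.json is left out because it needs a diff of the file's contents, not just its path.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath
from typing import Optional

ALLOWED = {
    "/components/ui/primary-button.tsx",
    "/components/ui/primary-button.test.tsx",
    "/app/forms/[id]/page.tsx",
}
BLOCKED = ["/app/api/auth/**", "/prisma/migrations/**", ".env*", "middleware.ts"]
MAX_FILES = 5
MAX_COMMITS = 9


def is_blocked(path: str) -> bool:
    """Match blocked patterns against the full path and the bare file name."""
    name = PurePosixPath(path).name
    return any(fnmatchcase(path, p) or fnmatchcase(name, p) for p in BLOCKED)


def kill_reason(modified: list[str], commit_count: int) -> Optional[str]:
    """Return the first kill condition that fires, or None to keep going."""
    for path in modified:
        if is_blocked(path):
            return f"blocked path touched: {path}"
        # Exact comparison on purpose: fnmatch would read "[id]" as a
        # character class, so allowed paths are not treated as patterns.
        if path not in ALLOWED:
            return f"out of scope: {path}"
    if len(modified) > MAX_FILES:
        return "scope creep: too many files modified"
    if commit_count > MAX_COMMITS:
        return "too many retries"
    return None
```

One detail to watch if you write your own version: Next.js-style paths such as /app/forms/[id]/page.tsx contain glob metacharacters, so pattern-matching the allowed list can quietly stop matching the very file you meant to allow.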

Step 4: Ralph Configuration (1 minute)

The skill converts your Issue 03 spec into Ralph’s prd.json format. This is what you see:

{

"branchName": "night-shift/20260407-rename-button",

"maxIterations": 3,

"userStories": [

{

"id": "fix-rename-button",

"priority": 1,

"title": "Rename confusing button label",

"context": "The 'Complete' button on the form page confused 3 of 4 testers. They thought it meant 'mark as done' rather than 'submit the form'.",

"requirements": [

"Rename button label from 'Complete' to 'Submit Form'",

"Update the tooltip to say 'Send your response'",

"Update the test that checks the button label"

],

"tests": [

"Button renders with text 'Submit Form'",

"Tooltip contains 'Send your response'",

"Existing click behavior unchanged",

"Existing test passes"

],

"passes": false

}

]

}

The skill also generates /.night-shift/run.sh (the start script) and /.night-shift/kill.sh (the abort switch).
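The conversion itself is mostly a field mapping. The sketch below shows the idea, with loud caveats: the input keys (`id`, `title`, `context`, `requirements`, `acceptance`) are an assumed shape for a parsed spec, `spec_to_prd` is a hypothetical name, and the output only mirrors the example above. Check references/ralph-integration.md for the format Ralph actually expects.

```python
def spec_to_prd(spec: dict, run_date: str, max_iterations: int = 3) -> dict:
    """Map a parsed spec onto the prd.json shape shown in the example above."""
    story = {
        "id": spec["id"],
        "priority": 1,
        "title": spec["title"],
        "context": spec["context"],
        "requirements": list(spec["requirements"]),
        "tests": list(spec["acceptance"]),
        # Starts as False, as in the example.
        "passes": False,
    }
    # Assumed naming rule: "fix-rename-button" -> "rename-button".
    suffix = spec["id"].removeprefix("fix-")
    return {
        "branchName": f"night-shift/{run_date}-{suffix}",
        "maxIterations": max_iterations,
        "userStories": [story],
    }
```

Passing the result through `json.dumps(prd, indent=2)` gives you a file you can diff against the one the skill generates.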

Step 5: Final Briefing (30 seconds)

The skill presents:

NIGHT SHIFT READY

Configuration:

Spec: fix-rename-button (1 spec)

Max iterations: 3

Max runtime: 1 hour

Scope: 3 files under /components/ui/ and /app/forms/

Guardrails:

• Hard blocks on auth, migrations, env, dependencies

• Abort on any scope violation

• Abort on >5 files modified

• Abort on test failure after 2 retries

To start the loop:

bash /.night-shift/run.sh

To abort if needed:

bash /.night-shift/kill.sh

In the morning (or in 1 hour), say:

"review last night's work"

Ready. Go take a nap or go to sleep.

You do: Run bash /.night-shift/run.sh. Then close your laptop and go to sleep.

The skill does NOT auto-start the loop; the human confirms the launch. This is deliberate; there should always be one human action between “configured” and “running.”

The Morning Ritual (REVIEW mode)

You wake up at 7 AM. Open Claude Code and type:

Review last night's work.

Step 1: Load State (10 seconds)

The skill looks for /.night-shift/prd.json, the progress files, and the git log, then confirms the loop actually ran.

Step 2: Run Analysis (30 seconds)

The skill runs scripts/morning_review.py --since "8 hours ago" --allowed-scope /components/ui /app/forms --run-tests:

Here’s what the report can look like across the four possible outcomes. The one you wake up to depends on how the run went.

Outcome A — SHIPPED ✅

OVERNIGHT REPORT: SHIPPED ✅

SPEC:

✅ fix-rename-button — 3 commits, all acceptance criteria met

TIMELINE:

11:15 PM — Loop started

11:23 PM — Button label updated

11:24 PM — Tooltip updated

11:27 PM — Test updated and passing

11:28 PM — Loop completed successfully

FILES MODIFIED (in scope):

/components/ui/primary-button.tsx

/components/ui/primary-button.test.tsx

/app/forms/[id]/page.tsx

FILES MODIFIED (out of scope): none ✅

TESTS:

Before: 47 passing

After: 47 passing ✅

KILL CONDITIONS TRIGGERED: none

AGENT LEARNINGS (progress.txt):

"Button label was straightforward to rename. Tooltip required updating

the translation file too but that was in scope. Test file needed the

new label string — updated and re-ran."

RECOMMENDED NEXT STEPS:

1. Review the diff: git diff main..night-shift/20260407-rename-button

2. Merge when verified

3. Deploy (Issue 07)

Then you do: Review the diff; it’s clean. Merge and deploy. Total morning time spent: 5 minutes. The agent spent 13 minutes on work that would have taken you 15-20 minutes during the day.

Outcome B — PARTIAL ⚠️

You had 2 specs in a later run. One worked, one didn’t:

OVERNIGHT REPORT: PARTIAL ⚠️

SPECS:

✅ feature-response-analytics — 8 commits, tests passing

⚠️ fix-mobile-form — 3 commits, stuck in retry loop, aborted

TIMELINE:

11:03 PM — Loop started

11:45 PM — feature-response-analytics completed ✅

11:47 PM — Started fix-mobile-form

01:22 AM — Tests failing, iteration 2 of 3

02:10 AM — Tests failing, iteration 3 of 3

02:10 AM — KILL CONDITION: tests failed twice, aborted

FILES MODIFIED (in scope): 6 files

FILES MODIFIED (out of scope): none ✅

TESTS:

Before: 47 passing

After: 52 passing, 3 failing (in mobile form tests)

AGENT LEARNINGS (progress.txt):

"The mobile form test expects a prop 'onSubmitComplete' but the spec

only mentions 'onSubmit'. Tried adding both, tests still fail because

the test mocks the prop differently than I'm defining it..."

RECOMMENDED NEXT STEPS:

1. Merge feature-response-analytics (clean, ready to deploy)

2. Revert fix-mobile-form commits (agent got stuck)

3. The spec was ambiguous about the prop contract — rewrite with explicit

prop definitions

4. Try fix-mobile-form again in the next overnight run

Want me to do the merge and revert now?

Then you do: Confirm, and the skill runs the merge and the revert. You rewrite the mobile form spec with explicit prop definitions. Tomorrow night, you try it again. Total morning time spent: 10 minutes. You shipped one feature overnight and learned one spec pattern to avoid.

Outcome C — STUCK 🔄

OVERNIGHT REPORT: STUCK 🔄

SPEC:

🔄 fix-mobile-form — 2 commits, then stuck

TIMELINE:

11:03 PM — Loop started

11:12 PM — First attempt, test failing

11:18 PM — Second attempt, same error

11:24 PM — Third attempt, same error

11:24 PM — KILL CONDITION: same error × 3, aborted

STUCK PATTERN DETECTED:

Same error message repeated 3 times:

"TypeError: Cannot read property 'submit' of undefined"

AGENT LEARNINGS (progress.txt):

"I keep getting the same error. The test imports the component from

a path I can't see in the repo. I tried 3 different fix approaches,

all produced the same error."

RECOMMENDED NEXT STEPS:

1. The test file has an import the agent couldn't resolve

2. Open the test file manually, check the import path

3. The spec didn't mention this dependency — update it

4. Revert the 2 commits: git reset --hard HEAD~2

Then you do: Check the test file. The import comes from a custom hook in a non-standard location, and the spec didn’t mention it. You update the spec with the import context. Tomorrow night’s run succeeds in 4 minutes. Total morning time spent: 5 minutes + spec update. You learned: specs for tests need to include relevant import paths.
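The "same error × 3" kill condition is easy to reason about in code. Here is a minimal sketch, assuming you already have the error messages from each iteration as a list of strings. `detect_stuck` is a hypothetical name, and this version only counts consecutive repeats.

```python
from typing import Optional


def detect_stuck(errors: list[str], threshold: int = 3) -> Optional[str]:
    """Return the error message if it repeats `threshold` times in a row."""
    streak = 0
    previous = None
    for message in errors:
        normalized = message.strip()
        # Extend the streak on a repeat, otherwise start a new one.
        streak = streak + 1 if normalized == previous else 1
        previous = normalized
        if streak >= threshold:
            return normalized
    return None
```

Counting only consecutive repeats is a deliberate choice in this sketch: an error that shows up, goes away, and comes back may be real progress, while three identical failures in a row almost never are.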

Outcome D — DRIFTED 🚨

This is the scary one:

OVERNIGHT REPORT: DRIFTED 🚨

⚠️ SCOPE VIOLATIONS DETECTED

FILES MODIFIED (out of scope):

/app/api/auth/session/route.ts ← BLOCKED PATH

/middleware.ts ← BLOCKED PATH

/components/layout/nav.tsx ← not in allowed scope

TIMELINE:

11:03 PM — Loop started

11:15 PM — First commit OK (in scope)

11:22 PM — Second commit OK (in scope)

11:38 PM — Third commit TOUCHED auth/session — violated scope

11:38 PM — KILL CONDITION: scope violation, aborted

RECOMMENDED ACTION:

RESET. Do not try to salvage individual commits.

git reset --hard $(cat /.night-shift/checkpoint)

Something in the spec led the agent toward auth code even though the

spec wasn't about auth. Figure out what before trying again.

Then you do: Reset, then read the original spec. It mentioned “when the user is logged in” as context, and the agent read that as a requirement to modify the auth logic. You rewrite the spec to say “when the user has an active session” and explicitly note “do not modify any auth code.” The next run succeeds. Total lost time: about 35 minutes of agent work thrown away, plus 15 minutes of your morning to diagnose and fix the spec.

This is the worst case; it’s annoying, but the kill condition triggered at the right moment. Without the guardrails, the agent could have spent 7 more hours modifying the auth code before you woke up. Instead, it aborted at 11:38 PM and sat idle until morning.
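The four outcomes above follow a simple priority order, which this sketch tries to capture. It is an assumption about how the categorization could work, not a copy of morning_review.py. `SpecResult`, `RunSummary`, and `categorize` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class SpecResult:
    name: str
    shipped: bool  # acceptance criteria met and tests passing


@dataclass
class RunSummary:
    specs: list[SpecResult]
    out_of_scope_files: list[str] = field(default_factory=list)


def categorize(run: RunSummary) -> str:
    """Drift outranks everything; otherwise count how many specs shipped."""
    if run.out_of_scope_files:
        return "DRIFTED"
    shipped = sum(1 for spec in run.specs if spec.shipped)
    if shipped > 0 and shipped == len(run.specs):
        return "SHIPPED"
    if shipped > 0:
        return "PARTIAL"
    return "STUCK"
```

Checking for drift first matters: a run that shipped one spec but also touched a blocked path should be reported as DRIFTED, so you reset instead of merging.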

Starting Small: The Trust Curve

Your trust in overnight runs builds through phases. Skipping phases is the #1 reason people abandon the technique.

Phase 1: Nap Runs (first 3-5 attempts)

1 spec, maximum

1 hour runtime, maximum

Specs must be SIMPLE (rename, reword, one-file fix)

You run them during the day, not overnight — set the timer, come back in an hour

Goal: discover what guardrails your specific codebase needs

Expected success rate: 30-50%

Phase 2: Half-Night Runs (attempts 5-15)

2-3 specs, maximum

3-4 hours runtime, maximum

You run them overnight, but on nights you can afford to wake up and diagnose

Mix simple and medium specs

Goal: build a library of proven guardrail patterns

Expected success rate: 50-70%

Phase 3: Full-Night Runs (attempts 15+)

3-5 specs, maximum (more is usually too much)

6-8 hours runtime, maximum

You trust the kill conditions enough to sleep through them

Mix of complexity levels

Goal: compound your shipping velocity

Expected success rate: 60-80% for well-scoped specs

Do not skip phases. If you try to start with a full-night run on 5 specs, you will wake up to a mess and conclude that overnight runs don’t work. They do work, but only after calibration. That calibration happens in nap runs where failures are cheap.
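If it helps to see the trust curve as data, here is one way to encode the three phases and check a planned run against them. This is a sketch with one assumption to flag: the attempt ranges in the text overlap at 5 and 15, so this version gives the boundary attempt to the earlier, stricter phase. The names `Phase`, `phase_for`, and `within_limits` are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Phase:
    name: str
    max_specs: int
    max_hours: int
    last_attempt: float  # inclusive upper bound on the attempt number


PHASES = [
    Phase("nap", max_specs=1, max_hours=1, last_attempt=5),
    Phase("half-night", max_specs=3, max_hours=4, last_attempt=15),
    Phase("full-night", max_specs=5, max_hours=8, last_attempt=float("inf")),
]


def phase_for(attempt: int) -> Phase:
    """Pick the phase for the Nth attempt (1-based)."""
    for phase in PHASES:
        if attempt <= phase.last_attempt:
            return phase
    raise ValueError("no phase found for this attempt number")


def within_limits(attempt: int, specs: int, hours: float) -> bool:
    """True if the planned run fits the caps of the current phase."""
    phase = phase_for(attempt)
    return specs <= phase.max_specs and hours <= phase.max_hours
```

For example, `within_limits(2, 5, 8)` is false: a second attempt is still a nap run, so 5 specs over 8 hours is exactly the phase-skipping the paragraph above warns against.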

Same Backlog, Two Approaches

You have 6 specs from launch feedback. Normal work week: 2 hours/day × 5 days = 10 hours of build time. You’d get through 4 specs and push the last 2 to next week.

Without overnight runs:

Week 1: Build 4 specs during daytime work sessions. Users wait.

Week 2: Finish the last 2 specs. Deploy everything. Users have been waiting 2 weeks.

Total user-visible shipping time: 2 weeks.

With overnight runs (after calibration):

Monday evening: Configure a half-night run for 2 simple specs. Sleep.

Tuesday morning: Review. Both shipped. Merge and deploy in 10 minutes of your morning.

Tuesday: Build 1 medium spec during your 2-hour window.

Tuesday evening: Configure a half-night run for 1 medium spec + 1 simple spec. Sleep.

Wednesday morning: Review. Medium spec stuck, simple spec shipped. Merge simple, rewrite medium spec.

Wednesday: Build 1 spec during the day.

Wednesday evening: Retry medium spec overnight with updated context.

Thursday morning: Shipped. Deploy everything.

Total user-visible shipping time: 4 days. Same 6 specs. Same quality. 3x faster end-to-end because the agent worked while you slept.

Notice the ratio: maybe 60% of overnight runs shipped successfully. Some required fixes. That’s realistic. The win isn’t 100% automation — it’s compressing a 2-week cycle into 4 days through incremental progress at night.

Common Failure Modes (And What They Mean)

“The agent keeps making the same mistake.” The spec is ambiguous in a specific way. The agent picks one interpretation, but it doesn’t work; it tries the same interpretation again. Fix: add explicit guidance in the spec about which interpretation to choose.

“The agent touched files I didn’t expect.” The spec referenced something tangentially that the agent followed as a lead. Fix: add explicit “do not modify” clauses to the Constraints section.

“The agent installed a new dependency.” Your spec didn’t forbid it. Fix: add to kill conditions for every overnight run: “abort on any new dependency.”

“Tests were passing, but the code is wrong.” Your tests don’t cover the thing the agent got wrong. This isn’t the agent’s fault — it’s a test coverage gap. Either add tests or add verification steps to the spec’s Acceptance section.

“Nothing happened.” Pre-flight check probably failed. Check /.night-shift/ralph.log for the error. Most common: dirty git state detected after a commit you made between running the skill and going to bed.

“The run timed out, but no progress was made.” The first iteration hit a blocker that the agent couldn’t solve and just kept retrying. Fix: tighter kill condition on “same error × 3” or lower iteration cap.

Common Objections (And Why They’re Wrong)

“This feels too risky. What if it breaks production?”

It can’t. The loop runs on a feature branch, never on main. The kill conditions abort on any scope violation. Your pre-sleep git checkpoint lets you reset any damage in 5 seconds. The worst case is “wasted agent time” — not “broken production.”

“I’d rather just hire a contractor for $X/hour.”

For well-scoped bug fixes and small features, a contractor charges $50-150/hour. An overnight run using Claude costs maybe $2-10 in API calls. Even if only 40% of your runs succeed (below the 60-80% you can expect after calibration), the cost per shipped feature is still 10-20x cheaper than contractor work. The trade-off: you spend your morning reviewing and fixing failures instead of having a contractor handle them end-to-end. Different trade-off, same outcome.
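The agent side of that comparison is quick arithmetic. If every run costs the same whether it ships or not, and each run succeeds with the same probability, you need 1 / success rate runs per shipped feature on average. The sketch below uses only the figures quoted above ($2-10 per run, 40% success). It assumes independent runs and ignores your review time. The contractor side is left out because it depends on hours per feature, which vary by project.

```python
def cost_per_shipped_feature(cost_per_run: float, success_rate: float) -> float:
    """Expected API spend per success: cost per run divided by success rate."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_run / success_rate


low = cost_per_shipped_feature(2.0, 0.4)    # 5.0
high = cost_per_shipped_feature(10.0, 0.4)  # 25.0
```

So at a 40% success rate, the $2-10 per run works out to roughly $5-25 of API spend per feature that actually ships.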

“I don’t sleep 8 hours a night.”

Neither do most developers. Overnight runs work for any unattended period. 4-hour half-nights are common. Some developers run them during long meetings or workout sessions. “Overnight” is the extreme — autonomous runs work for any chunk of time you won’t be at your keyboard.

“Ralph is too much setup. Can’t Claude Code just do this natively?”

Yes, Claude Code has native /loop and /schedule commands. The references/ralph-integration.md file covers both options. Ralph has more battle-tested kill conditions via shell hooks, but Claude Code’s native features are simpler. Use whichever fits your workflow. The skill’s guardrails and review process work with either.

“What if I want the agent to work during the day too?”

Then you’re past overnight runs. You want Dorothy (from Issue 09’s parallel-tools.md) or a continuous orchestration layer. Night-shift is specifically about the unattended window. Beyond that, you’re building an always-on agent workforce — different problem, different tools.

Here’s what you learned today:

Your agent can work 56+ hours a week that you’re currently throwing away. The math is simple: you sleep, the agent doesn’t. The hard part is building enough trust to let it work unsupervised.

Autonomous runs fail more than any other technique in this series. First runs succeed maybe 30-40% of the time. After calibration (5-10 runs), the success rate climbs to 60-80%. Expect failure. Learn from each one.

The night-shift skill handles the rituals, not the loop itself. Ralph (or Claude Code’s /loop) runs the loop. The skill adds the pre-sleep pre-flight check, guardrail generation, and morning review: the human moments when autonomous runs actually succeed or fail.

Start with nap runs, not overnights. 1 spec, 1 hour, during the day. Build trust incrementally. Phase 1 → Phase 2 → Phase 3 over several weeks.

Guardrails are more important than the loop. Ralph already handles loops well. The risk is an unbounded scope. Good guardrails abort the loop at the first sign of drift. Your references/guardrails.md becomes your project-specific safety net.

Morning review is not optional. Never auto-merge overnight work. Every run needs a structured review: outcome categorization, diff verification, and cleanup of failed work. The skill handles the structure; you make the decisions.

The Series Is Complete

Ten issues ago, your problem was overthinking: you had ideas and no output. Your agent didn’t know your project, and your specs were vague. You built features sequentially and deployed rarely. You never launched; the backlog grew while your shipping velocity stayed flat.

A tutorial teaches you to do something once, but a system compounds. Every spec you write gets better because you’ve seen what makes specs fail. Every skill you install adds a new deterministic capability that survives across sessions. Every overnight run teaches your guardrails something about your specific codebase.

Six months from now, you’ll be shipping features faster than you thought possible — not because you got more disciplined, but because your system got more capable.

The goal was never “learn AI tools.”

The goal was: stop being stuck, turn the ideas in your head into things real users are using. Ship. Keep shipping. Build the habit. That’s what this system does when you actually run it.

What to Do This Week

Not next quarter, this week.

Tonight: install the night-shift skill and set up Ralph. 15 minutes.

Tomorrow at lunch: run your first nap run. 1 simple spec, 1 hour, during your lunch break. Expect it to fail. That’s fine. Learn.

Tomorrow evening: try again. Tighter guardrails, clearer spec. Maybe it works. Maybe it doesn’t. Either way, you learned more about your specific codebase.

End of the week: attempt your first real overnight run. 1-2 specs, 4-hour cap. Wake up to a structured report.

Next week: adjust guardrails based on what you saw. Update references/guardrails.md with project-specific patterns. Try again.

After a month of this, you’ll have a working overnight practice. After three months, you’ll wonder how you ever built during daytime hours.

Final Note

You have everything you need: the skills are installed, the specs are in place, and the pipeline works end-to-end. No more issues in this series will arrive in your inbox.

What comes next depends on you.

You can treat this as content you consumed and move on; that happens. Most people who read a 10-issue series don’t actually build the system. They read, nod, feel informed, and go back to overthinking. If that’s you, that’s fine — but be honest about it.

Or you can use what’s here. Tonight, install the last skill; tomorrow, run your first nap run. This week, ship something overnight. Next week, ship more. Keep the loop running, keep the specs coming, keep the shipping habit alive.

We built this because we saw too many competent builders stuck between “I have an idea” and “real users are using it.”

Technology wasn’t the problem.

The gap was operational: how to turn scattered ideas into a system that consistently produces shipped work. That’s what these 10 issues give you.

Use it.

We’ll be writing more: future series, one-off deep dives, and occasional tactical breakdowns. But the core system stops here. Everything else we publish will be additive; you don’t need more lessons. You need to run the system you already have.

Ship something this week, then ship something else next week, then keep going.

Thanks for building with us.

— Ale & Manuel

PS. If this series changed how you ship, we'd love to know. Reply to any issue, or share it with a developer who's still stuck on their first side project.

And whenever you are ready, there are 2 ways I can help you:

1. AI Side-Project Clarity Scorecard (Discover what’s blocking you from shipping your first side-project)

2. NoIdea (Pick a ready-to-start idea created from real user problems)